About seven years ago, my adviser and I were sitting in his office Googling things as part of research for my thesis. I can't remember what we were looking for, but just after we clicked on a promising search result, the Adobe splash screen popped up. As if on cue, we both let out a groan in unison as we waited for the PDF plugin to load. In that instant, it struck me that I could build a small Firefox extension to make browsing the Web just a little bit better.

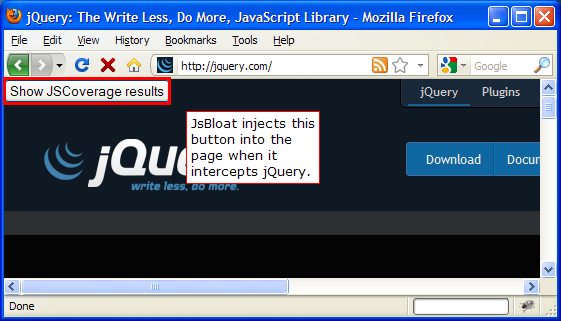

Shortly thereafter, I created TargetAlert: a browser extension that would warn you when you were about to click on a PDF. It used the simple heuristic of checking whether the link ended in pdf, and if so, it inserted a PDF icon at the end of the link, as shown on the original TargetAlert home page.
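To make the heuristic concrete, here is a minimal sketch in TypeScript. It is not TargetAlert's actual code: the function name `looksLikePdfLink` is hypothetical, and stripping the query string and fragment and ignoring case are my assumptions about what a reasonable version of the check would do.

```typescript
// Hypothetical helper, not taken from TargetAlert's source.
// Returns true when a link's path ends in ".pdf".
function looksLikePdfLink(href: string): boolean {
  // Assumption: ignore any fragment or query string, so that
  // "paper.pdf#page=2" and "paper.pdf?download=1" still match.
  const path = href.split("#")[0].split("?")[0];
  // Assumption: match the extension case-insensitively.
  return /\.pdf$/i.test(path);
}
```

A content script would run a check like this against each link on the page and append an icon to the links that match.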

And that was it! My problem was solved. Now I was able to avoid inadvertently starting up Adobe Reader as I browsed the Web.

But then I realized that there were other things on the Web that were irritating, too! Specifically, links that opened in new tabs without warning or those that started up Microsoft Office. Within a week, I added alerts for those types of links, as well.

After adding those features, I should have been content with TargetAlert as it was and put it aside to focus on my thesis, but then something incredible happened: I was Slashdotted! Suddenly, I had a lot more traffic to my site and many more users of TargetAlert, and I did not want to disappoint them, so I added a few more features and updated the web site. Bug reports came in (which I recorded), but it was my last year at MIT, and I was busy interviewing and TAing on top of my coursework and research, so updates to TargetAlert were sporadic after that. It wasn't until the summer between graduation and starting at Google that I had time to dig into TargetAlert again.

The primary reason that TargetAlert development slowed, though, is that Firefox extension development should have been fun, but it wasn't. At the time, every time you made a change to your extension, you had to restart Firefox to pick up the change. As you can imagine, that made for a slow edit-reload-test cycle, inhibiting progress. Also, instead of using simple web technologies like HTML and JSON, Firefox encouraged the use of more obscure things, such as XUL and RDF. The bulk of my energy was spent on getting information into and out of TargetAlert's preferences dialog (because I actually tried to use XUL and RDF, as recommended by Mozilla), whereas the fun part of the extension was taking the user's preferences and applying them to the page.

The #1 requested feature for TargetAlert was for users to be able to define their own alerts (as it was, users could only enable or disable the alerts that were built into TargetAlert). Conceptually, this was not a difficult problem, but realizing the solution in XUL and RDF was an incredible pain. As TargetAlert didn't generate any revenue and I had other personal projects (and work projects!) that were more interesting to me, I never got around to satisfying this feature request.

Fast-forward to 2011 when I finally decommissioned a VPS that I had been paying for since 2003. Even though I had rerouted all of its traffic to a new machine years ago and it was costing me money to keep it around, I put off taking it down because I knew that I needed to block out some time to get all of the important data off of it first, which included the original CVS repository for TargetAlert.

As part of the data migration, I converted all of my CVS repositories to SVN and then to Hg, preserving all of the version history (it should have been possible to convert from CVS to Hg directly, but I couldn't get hg convert to work with CVS). Once I had all of my code from MIT in a modern version control system, I started poking around to see which projects would still build and run. It turns out that I have been a stickler for creating build.xml files for personal projects for quite some time, so I was able to compile more code than I would have expected!

But then I took a look at TargetAlert. The JavaScript that I wrote in 2004 and 2005 looks gross compared to the way I write JavaScript now. It's not even that it was totally disorganized -- it's just that I had been trying to figure out what the best practices were for Firefox/JavaScript development at the time, and they just didn't exist yet.

Further, TargetAlert worked on pre-Firefox 1.0 releases through Firefox 2.0, so the code is full of hacks to make it work on those old versions of the browser that are now irrelevant. Oh, and what about XUL? Well, my go-to resource for XUL back in the day was xulplanet.com, though the site owners have decided to shut it down, which made deciphering that old code even more discouraging. Once again, digging into Firefox extension development to get TargetAlert to work on Firefox 4.0 did not appear to be much fun.

Recently, I have been much more interested in building Chrome apps and extensions (Chrome is my primary browser, and unlike most people, I sincerely enjoy using a Cr-48), so I decided to port TargetAlert to Chrome. This turned out to be a fun project, especially because it forced me to touch a number of features of the Chrome API, so I ended up reading almost all of the documentation to get a complete view of what the API has to offer (hooray learning!).

Compared to Firefox, the API for Chrome extension development seems much better designed and documented. Though to be fair, I don't believe that Chrome's API would be this good if it weren't able to leverage so many of the lessons learned from years of Firefox extension development. For example, Greasemonkey saw considerable success as a Firefox extension, which made it obvious that Chrome should make content scripts an explicit part of its API. (It doesn't hurt that the creator of Greasemonkey, Aaron Boodman, works on Chrome.) Also, where Firefox uses a wacky, custom manifest file format for one metadata file and an ugly ass RDF format for another metadata file, Chrome uses a single JSON file, which is a format that all web developers understand. (Though admittedly, having recently spent a bit of time with manifest.json files for Chrome, I feel that the need for my suggested improvements to JSON is even more compelling.)
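For a sense of how little metadata that single JSON file needs, here is a sketch of a manifest with one content script and an options page, built as a TypeScript object and serialized. The keys (`name`, `version`, `options_page`, `content_scripts`, `matches`, `js`) are real manifest keys, but the file names and URL patterns are placeholders I made up for illustration, not TargetAlert's actual manifest. Current versions of Chrome also require a `manifest_version` key, which is left out here.

```typescript
// Minimal slice of the manifest format, typed only far enough for this sketch.
interface ContentScript {
  matches: string[]; // URL patterns for the pages the script is injected into
  js: string[];      // script files, relative to the extension's root directory
}

interface ExtensionManifest {
  name: string;
  version: string;
  options_page?: string;
  content_scripts?: ContentScript[];
}

const manifest: ExtensionManifest = {
  name: "TargetAlert",
  version: "0.6",
  options_page: "options.html", // hypothetical file name
  content_scripts: [
    {
      matches: ["http://*/*", "https://*/*"], // assumed: run on all web pages
      js: ["targetalert.js"]                  // hypothetical file name
    }
  ]
};

// The text that would be saved as manifest.json.
const manifestJson: string = JSON.stringify(manifest, null, 2);
```

Compare that with maintaining two metadata files in two different formats.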

As TargetAlert was not the first Chrome extension I had developed, I already had some idea of how I would structure my new extension. I knew that I wanted to use both Closure and plovr for development, which meant that there would be a quick build step so that I could benefit from the static checking of the Closure Compiler. Although changes to Chrome extensions do not require a restart to pick up any changes, they do often require navigating to chrome://extensions and clicking the Reload button for your extension. I decided that I wanted to eliminate that step, so I created a template for a Chrome extension that uses plovr in order to reduce the length of the edit-reload-test cycle. This enabled me to make fast progress and finally made extension development fun again! (The README file for the template project has the details on how to use it to get up and running quickly.)

I used the original code for TargetAlert as a guide (it had some workarounds for web page quirks that I wanted to make sure made it to the new version), and within a day, I had a new version of TargetAlert for Chrome! It had the majority of the features of the original TargetAlert (as well as some bug fixes), and I felt like I could finally check "resurrect TargetAlert" off of my list.

Except I couldn't.

A week after the release of my Chrome extension, I only had eight users according to my Chrome developer dashboard. Back in the day, TargetAlert had tens of thousands of users! This made me sad, so I decided that it was finally time to make the Chrome version of TargetAlert better than the original Firefox version: I was finally going to support user-defined alerts! Once I actually sat myself down to do the work, it was not very difficult at all. Because Chrome extensions have explicit support for an options page in HTML/JS/CSS that has access to localStorage, building a UI that could read and write preferences was a problem that I had solved many times before. Further, being able to inspect and edit the localStorage object from the JavaScript console in Chrome was much more pleasant than mucking with user preferences in about:config in Firefox ever was.
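To show why this was so much easier, here is a minimal sketch of persisting user-defined alerts as JSON through the Web Storage interface. Everything specific in it is an assumption for illustration: the `AlertRule` shape, the `userAlerts` key, and the function names are hypothetical and are not TargetAlert's real schema.

```typescript
// Hypothetical shape of a user-defined alert.
interface AlertRule {
  name: string;    // label shown on the options page
  pattern: string; // regular expression source, tested against a link's href
}

// The subset of the Web Storage API used here; window.localStorage satisfies it.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

const STORAGE_KEY = "userAlerts"; // assumed key name

// Serializes the full list of alerts into a single storage entry.
function saveAlerts(store: KeyValueStore, alerts: AlertRule[]): void {
  store.setItem(STORAGE_KEY, JSON.stringify(alerts));
}

// Reads the alerts back, falling back to an empty list when nothing
// is stored or the stored value is not a JSON array.
function loadAlerts(store: KeyValueStore): AlertRule[] {
  const raw = store.getItem(STORAGE_KEY);
  if (raw === null) {
    return [];
  }
  try {
    const parsed = JSON.parse(raw);
    return Array.isArray(parsed) ? parsed : [];
  } catch (e) {
    return [];
  }
}

// Returns the names of the alerts whose pattern matches the link.
// Note: an invalid pattern makes the RegExp constructor throw, so a real
// options page would need to validate patterns before saving them.
function alertsForLink(href: string, alerts: AlertRule[]): string[] {
  return alerts
    .filter(function (rule) { return new RegExp(rule.pattern, "i").test(href); })
    .map(function (rule) { return rule.name; });
}
```

On an options page, `saveAlerts(window.localStorage, rules)` would persist whatever the user typed into the form, and the stored JSON string can then be read or edited directly from the JavaScript console.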

So after years of feature requests, Target Alert 0.6 for Chrome is my gift to you. Please install it and try it out! With the exception of the lack of translations in the Chrome version of TargetAlert (the Firefox version had a dozen), the Chrome version is a significant improvement over the Firefox one: it's faster, supports user-defined alerts, and with the seven years of Web development experience that I've gained since the original, I fixed a number of bugs, too.
